|

Finally! Weather was a little terrible but we finally went out and collected our data sets today! The goal for today was to collect the data sets that we would need to begin building our terrain classifier. The data sets we wanted to collect were:

We were hoping to go out last Friday, but equipment issues plagued us once again. However, not today! Today the hardware worked fine, so we trucked out to Parcel B and collected data. I'll start by showing some in-the-moment photos and then the images from the dataset. So, now here are some photos from the dataset. With this data collected, we will now turn our attention to processing the data and trying to find classifiers that can successfully classify terrain in real time.

So this week we got back our generators from the service place, which means we're back in business in terms of being able to conduct field testing! So we thought... Turns out, our FPGA code was being a little silly and decided it was out of date compared to the bitfiles on the FPGA, and so refused to connect. So, we were again delayed in data collection. =( In any case, we built a new gravel patch for the "rocky terrain" data collection and that'll be the pictures for this week. So that is, unfortunately, our accomplishment for the week. We have done some Python work to get some data pipeline stuff written, but that's largely dry and nitty-gritty work. Hopefully we'll have the FPGA issue sorted out by Sunday and be able to collect data on Sunday (as long as the forecasted rain is done and gone by the forecasted time).

So I decided to double post this week to make up for the lack of posting in the past. I'll start with what work has been done since the last update!

The navigation team has started building a ROS-LabVIEW interface so that the lower-level LabVIEW code can interface with the higher-level ROS code that will handle path planning, among other high-level functions. So the navigation team is chugging away on that.

Today, the terrain classification team managed to collect their first set of data! In order to avoid being held up by the lack of a generator, we used very simple terrain that already existed and then built ourselves a little rock patch to scan to see if we could classify rocky terrain. The LIDAR and camera data have now been collected for that, so we will proceed to process that data and see what we can make of it in terms of terrain classification. For reference, here are a couple of sample images from our data sets:

I also realized that I have never posted actual pictures of the vehicle. So, here are a couple of pictures of the vehicle! And finally, to end off, here's a much clearer video of another brief drive-by-wire test we did today!

Another big thing we achieved this week was sensor characterization! Our advisor made us realize that we really should better understand where our sensors were taking measurements before we began collecting data. So, to better understand our sensors, we decided to tape out all our sensor reading locations on the ground!

So, the vehicle has 2 SICK LMS-291 LIDARs and a Point Grey Research Firefly FireWire camera on the front of the vehicle. All three sensors are meant to help the vehicle figure out what terrain it is driving over and whether there are obstacles in the path of the vehicle. We wanted to find out where the scanning planes of the two LIDARs contacted the ground and whether the view of the camera included the locations where the LIDAR scanning planes contacted the ground.

So, we parked the vehicle in the middle of a stretch of roadway and started taping out the boundaries. And behold! In all three pictures, you can see two horizontal lines and a trapezoid. The trapezoid represents the boundaries of the camera image. In addition, because the LIDARs are mounted such that each LIDAR is tilted at a slightly different angle, each horizontal line represents the line along which one of the LIDARs' scanning planes contacts the ground. Turns out, one of the LIDARs is contacting the ground about 15 feet from the front tires and the other is contacting the ground about 21 feet from the front tires. Moreover, both LIDAR scanning planes are well within the view of the camera. For us, this means the sensors are almost perfectly positioned! So, we're essentially good to go on data collection!
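For anyone curious about the geometry behind the taped lines, here is a rough sketch of how tilt angle and ground-contact distance relate for a downward-tilted scanning plane. It assumes perfectly flat ground and measures distance from the sensor itself rather than from the front tires. The 5 ft mount height is a made-up placeholder (we did not record the real mount height or tilt angles in this post), and the function names are just for illustration.

```python
import math


def ground_contact_distance(mount_height, tilt_down_deg):
    """Horizontal distance from the sensor to where a scan plane tilted
    downward by tilt_down_deg (measured from horizontal) meets flat ground.
    The result is in the same units as mount_height."""
    if not 0.0 < tilt_down_deg < 90.0:
        raise ValueError("tilt must be strictly between 0 and 90 degrees")
    return mount_height / math.tan(math.radians(tilt_down_deg))


def tilt_for_distance(mount_height, distance):
    """Downward tilt (degrees from horizontal) needed for the scan plane
    to meet flat ground at the given horizontal distance."""
    if mount_height <= 0.0 or distance <= 0.0:
        raise ValueError("mount_height and distance must be positive")
    return math.degrees(math.atan2(mount_height, distance))


if __name__ == "__main__":
    ASSUMED_MOUNT_HEIGHT_FT = 5.0  # hypothetical value, not measured
    for contact_ft in (15.0, 21.0):  # the two taped distances from the post
        tilt = tilt_for_distance(ASSUMED_MOUNT_HEIGHT_FT, contact_ft)
        print(f"{contact_ft:.0f} ft contact -> about {tilt:.1f} deg of downward tilt")
```

The takeaway is that at shallow tilt angles a small change in tilt moves the contact line by several feet, which is why physically taping out the lines was more trustworthy than guessing from the mounts.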

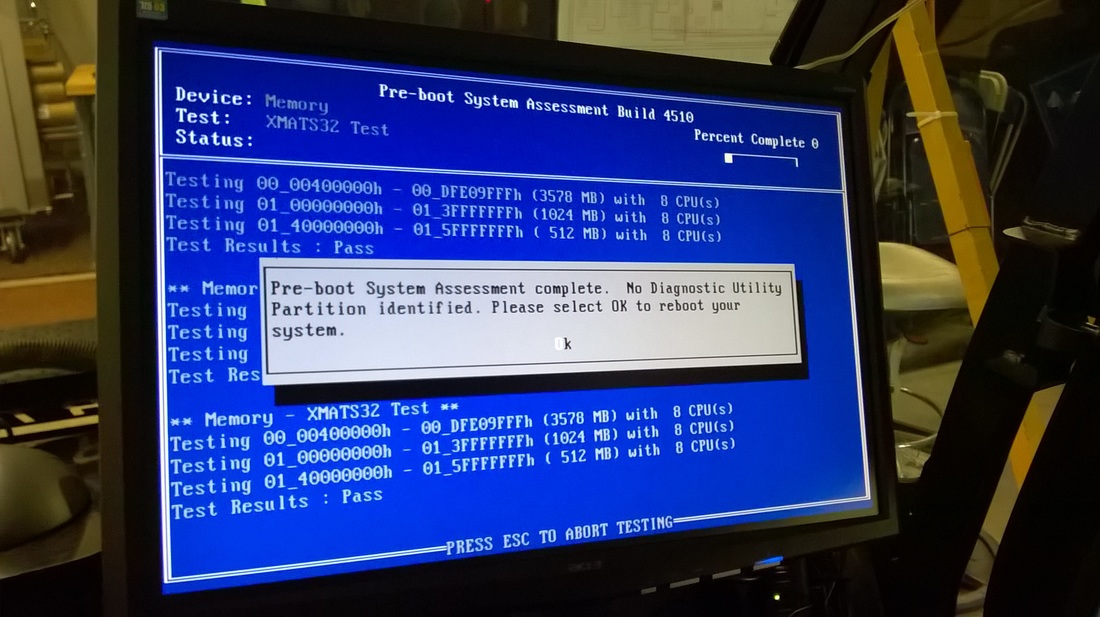

That's it! More to come in the coming weeks as we continue to make progress!

If you've read the previous post I wrote, you'll know it's been almost a month since I last posted about this project. But I have good news! We're now firmly off the ground on research! This week marked the point at which we have a known-working base robot that we can use to continue the research! Over the last few weeks, we have struggled to get an accurate assessment of all the existing hardware and whether anything needs to be replaced. However, we finally have a working vehicle that is capable of drive-by-wire!

You'll notice in the videos that there is always someone managing a cable of some kind. That's just an umbilical cord we have to power the electronics bay. Typically, the vehicle would have a generator running in the cargo bay to power the electronics bay. However, we recently ran into problems getting the generator to run, so for these tests we were relying on building power to keep the electronics bay running.

You'll also notice that the driving is a little jerky, and that's because we're all still new to the concept of driving a vehicle by wire. Turns out, when you close the loops on velocity control in an FPGA and only expose the desired speed parameter, controlling that parameter using the trigger control on an Xbox controller is not the easiest way to control a vehicle! So we'll be updating that control scheme so that you use the upper trigger buttons instead of the lower trigger button to control speed. This way, the left and right buttons could be used to increment or decrement the desired speed, kind of like how one would change the speed of a car when it is in cruise control.

That's it! More in another post to come about sensor characterization!

The Fall semester at Olin has just started and my team and I have hit the ground running in terms of getting research started as quickly as possible! As you may have gleaned from the description on the right-hand side of this blog, the aim of this research will be to write the software required to allow a John Deere Gator to navigate a mini-baja-type course autonomously and adjust its driving to suit the various types of terrain it may encounter. To do this, we will be modifying and upgrading an old research platform developed in 2010.

So, to start this research, we had to begin by dusting off the old John Deere Gator from the depths of storage and performing a "robotic archaeological dig" of sorts to figure out what still works and what doesn't. As one might imagine, this process is never easy, and problems showed up right from the moment we tried to retrieve the Gator. When we tried to start the engine, it wouldn't start, so we had to perform a recovery operation to haul the Gator back up to the Project Building at Olin, where we could work on it in a comfortable environment.

Given that the project is software focused and we didn't build the vehicle, we left the engine troubleshooting to a service call to John Deere and proceeded to inspect the onboard sensors and electronics. In doing our inspection, we reconnected wires that had been mysteriously unplugged and discovered that the server had a hardware malfunction that needed to be diagnosed. Given this and the fact that the NI chassis are all relatively outdated components, we decided that beginning hardware upgrades first, instead of trying to restore vehicle functionality, would be the logical way to proceed. So, one of the first major tasks this coming week will be upgrading the two NI chassis on board the Gator and then slowly trying to bring the various sensors online and confirm that we can still retrieve data from them. The goal will be to have tested at least the LIDARs and wheel encoders by the end of the week.

In addition to the hardware upgrades, this coming week we will also be laying out a rough roadmap for how the research will proceed and then hopefully officially kick off this research project! So that was the work this week! We didn't get a ton done work-wise, but we have made strides in understanding how the electronics were wired, and the vehicle is now much cleaner than when we first dug it out! |

Gator Research Blog

Welcome to the Gator Research Blog for the Fall 2015 semester! The aim of this research will be to develop the software to allow a John Deere Gator to navigate a mini-baja-type course autonomously. Follow our progress here! |